Anyone with a rough idea of Amazon Web Services (AWS) pricing already knows the answer on mining profitability in AWS: it is not profitable at this time. But that is not why we are here. We are here to review an experiment I recently ran on AWS.

The Experiment

Find the sweet spot for running GPU mining tests in AWS. The sweet spot combines the most GPU power with the lowest cost: the classic cloud computing formula.

Setup

I used xmr-stak to mine Leviar coin (XLC). I chose xmr-stak mostly out of familiarity, but also because it tries to optimize itself on startup when no configs are present, something I will rely on shortly. I chose Leviar because it had a low difficulty on the CryptoNight v7 algorithm, and I wanted enough hash rate to see differences easily. A heavier algorithm or greater difficulty would reduce hash rate by as much as a factor of 10. Plus, I wanted some Leviar, and they had a nice pool for viewing hash rates.

We will need an Amazon Machine Image (AMI) with the xmr-stak miner. When I checked the AWS Marketplace I found no such thing [DUH]. I was going to do it myself anyway.

Here is the base I used: How to Setup Ubuntu 18.04 for Coin Mining. I installed xmr-stak following the instructions in the xmr-stak Git repository and configured it for the Leviar pool. Important: remove any GPU and CPU configs created by xmr-stak. We need xmr-stak to create new configs for whichever EC2 instance type the AMI is launched on.
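The cleanup step before imaging can be sketched in a few lines of Python. This is a minimal sketch, not the exact procedure used here: it assumes xmr-stak wrote its hardware configs under the default names cpu.txt, nvidia.txt, and amd.txt in the directory it was run from, and the function name is made up for illustration.

```python
from pathlib import Path

# Assumed default names of the hardware configs xmr-stak writes on first run.
HARDWARE_CONFIGS = ("cpu.txt", "nvidia.txt", "amd.txt")


def remove_hardware_configs(miner_dir):
    """Delete generated CPU/GPU configs so xmr-stak re-detects hardware on next start.

    Pool and general settings are left alone. Returns the names of the
    files that were actually removed.
    """
    removed = []
    for name in HARDWARE_CONFIGS:
        path = Path(miner_dir) / name
        if path.is_file():
            path.unlink()
            removed.append(name)
    return removed
```

Calling `remove_hardware_configs()` on the miner's working directory just before creating the AMI leaves the pool settings in place while forcing fresh CPU and GPU detection on every instance type.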

The last piece was to make an AMI of the fully functional Ubuntu xmr-stak miner EC2 instance. The AMI will be used to launch EC2 instances that we can test.

Testing

I chose to test p2, p3, g2, and g3 instance types. These are the only instance types that come with a GPU on launch. Also, all of these types use NVIDIA GPUs and require CUDA for xmr-stak. They can be fairly expensive, so the goal was to run each for less than an hour. To keep costs low the instances were launched with spot pricing. All costs will reference the spot prices.
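For anyone scripting the launches instead of clicking through the console, the spot request can be sketched as below. This is only a sketch under assumptions: the AMI ID and key pair name are placeholders, the helper name is made up, and the dictionary is shaped for the boto3 EC2 client's `run_instances` call, which is not how these tests were necessarily launched.

```python
def spot_launch_params(ami_id, instance_type, key_name):
    """Build keyword arguments for a one-time spot launch of a single instance."""
    return {
        "ImageId": ami_id,
        "InstanceType": instance_type,
        "KeyName": key_name,
        "MinCount": 1,
        "MaxCount": 1,
        # Ask for spot capacity instead of on-demand; terminate on interruption.
        "InstanceMarketOptions": {
            "MarketType": "spot",
            "SpotOptions": {
                "SpotInstanceType": "one-time",
                "InstanceInterruptionBehavior": "terminate",
            },
        },
    }


# Hypothetical AMI ID and key pair name, for illustration only.
params = spot_launch_params("ami-0123456789abcdef0", "g2.8xlarge", "my-key")

# With boto3 installed and AWS credentials configured, the launch would be:
#   import boto3
#   boto3.client("ec2").run_instances(**params)
```

Keeping the parameters in a plain dictionary makes it easy to loop over instance types with the same AMI and settings.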

To test, a GPU instance was launched and xmr-stak started via SSH. Data was collected at 60 seconds. The pool settings were the same for all tests. Unfortunately I did not use a static difficulty but by 60 seconds the difficulties were about the same.

Here is an example xmr-stak config used for g2.8xlarge testing:

    "cpu_threads_conf" :
    [
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 0 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 1 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 2 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 3 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 4 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 5 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 6 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 7 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 8 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 9 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 16 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 17 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 18 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 19 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 20 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 21 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 22 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 23 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 24 },
        { "low_power_mode" : false, "no_prefetch" : true, "affine_to_cpu" : 25 },
    ],

    "gpu_threads_conf" :
    [
        // gpu: GRID K520 architecture: 30
        // memory: 3985/4037 MiB
        // smx: 8
        { "index" : 0,
          "threads" : 42, "blocks" : 24,
          "bfactor" : 0, "bsleep" : 0,
          "affine_to_cpu" : false, "sync_mode" : 3,
        },
        // gpu: GRID K520 architecture: 30
        // memory: 3985/4037 MiB
        // smx: 8
        { "index" : 1,
          "threads" : 42, "blocks" : 24,
          "bfactor" : 0, "bsleep" : 0,
          "affine_to_cpu" : false, "sync_mode" : 3,
        },
        // gpu: GRID K520 architecture: 30
        // memory: 3985/4037 MiB
        // smx: 8
        { "index" : 2,
          "threads" : 42, "blocks" : 24,
          "bfactor" : 0, "bsleep" : 0,
          "affine_to_cpu" : false, "sync_mode" : 3,
        },
        // gpu: GRID K520 architecture: 30
        // memory: 3985/4037 MiB
        // smx: 8
        { "index" : 3,
          "threads" : 42, "blocks" : 24,
          "bfactor" : 0, "bsleep" : 0,
          "affine_to_cpu" : false, "sync_mode" : 3,
        },
    ],

Results

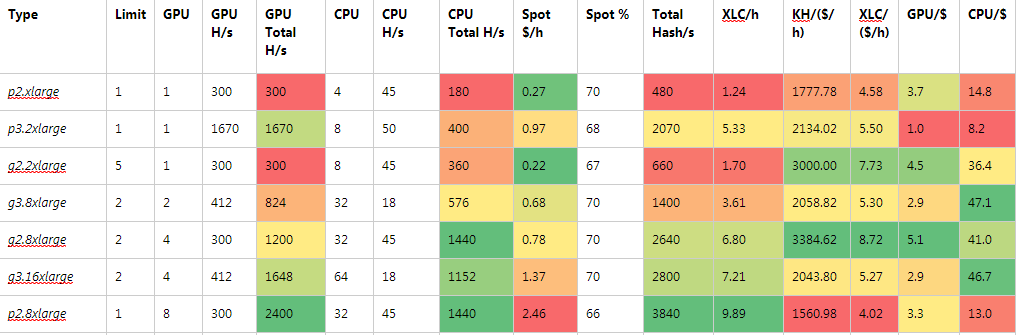

The results of the tests were about what could be predicted. More GPUs meant more hash rate. However, GPU power differed between the 2 series (p2, g2) and the 3 series (p3, g3) instances. The 2 series use older GPUs (GRID K520 in g2, Tesla K80 in p2), whereas the 3 series use newer ones (Tesla V100 in p3, Tesla M60 in g3). In general the hash rate per GPU was 300 H/s for the 2 series and 412 H/s for the 3 series. That puts the 2 series about 27% below the 3 series in GPU power, or the 3 series about 37% above the 2 series.
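The size of the gap depends on which series is taken as the baseline. A quick sketch of both readings, using the per-GPU figures above (the function names are just for illustration):

```python
def percent_less(lower, higher):
    """How far `lower` falls below `higher`, as a percentage of `higher`."""
    return (higher - lower) / higher * 100


def percent_more(higher, lower):
    """How far `higher` rises above `lower`, as a percentage of `lower`."""
    return (higher - lower) / lower * 100


series2_hs, series3_hs = 300, 412  # per-GPU hash rates in H/s from the tests

print(round(percent_less(series2_hs, series3_hs), 1))  # 27.2
print(round(percent_more(series3_hs, series2_hs), 1))  # 37.3
```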

CPU hash rates were counted as part of the total hash rate, since determining profitability means including all computing power. The g2, p2, and p3 instance types were within 10% of each other in CPU power. Surprisingly, the g3 instances had low CPU hash rates and came in over 60% lower.

Factoring compute power together with instance cost we get Table 1. The heat map shows favorable areas as green, decreasing to red. The highest hash rate also belongs to the highest cost instance. The p2.8xlarge instance type had the highest GPU hash rate at 2400 H/s and tied for the highest CPU hash rate at 1440 H/s. It therefore had the highest total hash rate at 3840 H/s, at a whopping cost of $2.46/hour.

The lowest hash rate belonged to the smallest instance, p2.xlarge. It had a GPU hash rate of 300 H/s and a CPU hash rate of 180 H/s. It was not the cheapest instance, though, at $0.27 per hour.

Knowing the highest and lowest performing instance types, we will now look at hashing power per dollar spent per hour. This will determine our sweet spot of best spent dollars for the hash rate. Larger numbers are better. Our highest performer clocks in at 1560 kH/$/h, which here is simply the total hash rate in H/s divided by the cost in $/h (3840 / 2.46). Basically, the cost cuts the hash rate per dollar to well under half of the raw figure. The lowest performer ran at 1778 kH/$/h (480 / 0.27). In these cases the highest and lowest performers by hash rate scored lowest in hash rate relative to cost per hour.
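The per-dollar figure is just total hash rate divided by the hourly spot price. A small sketch with the numbers quoted for the highest and lowest performers (the function name is made up for illustration):

```python
def hash_per_dollar_hour(gpu_hs, cpu_hs, price_per_hour):
    """Total hash rate (H/s) divided by the hourly price ($/h)."""
    return (gpu_hs + cpu_hs) / price_per_hour


# Figures quoted above: GPU H/s, CPU H/s, spot price in $/h.
highest = hash_per_dollar_hour(2400, 1440, 2.46)
lowest = hash_per_dollar_hour(300, 180, 0.27)

print(f"{highest:.0f}")  # 1561
print(f"{lowest:.0f}")   # 1778
```

The same function can be run over every row of the table to rank the instance types.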

Time now for the sweet spot. In Table 1, the best hash rate per dollar per hour goes to the g2.8xlarge instance type. The 4 GPUs and large number of CPUs at a sub-dollar cost clinched the sweet spot title. At 3385 kH/$/h it delivers the most hash rate for the money.

Or is it? The g2.2xlarge costs less than a third of the g2.8xlarge. This means 3 g2.2xlarge instances could run for less than one g2.8xlarge. Running 3 instances would triple the hash rate, but only to 1960 H/s. That is still well under the g2.8xlarge, and puts the trio closer to the g3.8xlarge in cost performance. The g2.8xlarge holds the sweet spot title.

We found the sweet spot, so how much can we make? The g2.8xlarge instance type can produce 8.72 XLC/($/h), which means that normalized to one dollar per hour I get about 8.72 Leviar coins. Leviar was about a penny a coin at the time of the test, so for every dollar spent on running the instance we would get back 8 to 9 cents. Not a good return, even with bad math skills. At 10 cents per coin the return would be about 87 cents per dollar, covering most of the cost; true break-even needs roughly 11.5 cents per coin (1 / 8.72). The issue is, when 10 cents a coin happens, what will the difficulty be and how would it affect hash rate?
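The return and break-even arithmetic can be checked with a short sketch. It assumes the 8.72 XLC per dollar-hour yield stays fixed, which ignores the difficulty changes raised above; the names are illustrative only.

```python
XLC_PER_DOLLAR_HOUR = 8.72  # yield measured for the g2.8xlarge


def return_per_dollar(coin_price, coins_per_dollar=XLC_PER_DOLLAR_HOUR):
    """Dollars of coin earned for each dollar spent on the instance."""
    return coins_per_dollar * coin_price


def break_even_price(coins_per_dollar=XLC_PER_DOLLAR_HOUR):
    """Coin price at which one dollar spent returns exactly one dollar."""
    return 1 / coins_per_dollar


print(round(return_per_dollar(0.01), 4))  # 0.0872
print(round(return_per_dollar(0.10), 3))  # 0.872
print(round(break_even_price(), 4))       # 0.1147
```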

Conclusion

Currently, mining Leviar coin in AWS is not profitable. This is not to say a break-even point could not be reached. However, if you are looking at break-even you may just want to purchase the coin. If you are like me and think cryptocoins will see their day, then mining may be worthwhile.

Certainly for testing purposes AWS is cost effective. I cannot afford a large rig, so I can test my ideas in AWS in under an hour for less than a dollar.

And now some interesting math. At one dollar an hour you would spend $720 in 30 days and, at 8.72 XLC per dollar-hour, would mine about 6,280 XLC. I bet you could buy a pretty decent GPU for $720. The point: it all depends on where you want to spend those dollars and whether you want coins to HODL.
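The 30-day estimate is easy to rerun with different assumptions. In this sketch the yield is a parameter, so both the measured 8.72 XLC per dollar-hour and a rounded 9 XLC can be tried; it assumes the yield holds constant for the whole month, and the function name is made up.

```python
def thirty_day_estimate(dollars_per_hour, xlc_per_dollar_hour, days=30):
    """Return (hours, total spend in $, coins mined) for a continuous run."""
    hours = days * 24
    spend = hours * dollars_per_hour
    coins = spend * xlc_per_dollar_hour
    return hours, spend, coins


hours, spend, coins = thirty_day_estimate(1.0, 8.72)
print(hours, spend, round(coins))  # 720 720.0 6278

# Rounding the yield up to 9 XLC per dollar-hour instead:
print(round(thirty_day_estimate(1.0, 9)[2]))  # 6480
```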

Hopefully you found this useful. Throw us a few coins to say thanks.