This article continues the series of previous articles looking at CPU and GPU platforms in Amazon Web Services (AWS) for mining and their profitability. Specifically, we will be experimenting with AWS’ ARM instances.

Arm is the company that designs the ARM family of Reduced Instruction Set Computing (RISC) processor architectures, which use a smaller set of simpler, more atomic instructions. Much of the current interest in ARM servers is in containerized computing such as Docker. The main attraction is that ARM instances are cheap and can be used to scale out CPU capacity for much less than Intel CPUs.

The Experiment

The experiment asks three questions: can an ARM image be built for cryptomining, what hash rate can an ARM CPU produce, and what does it cost?

Setup – ARM’ing Up

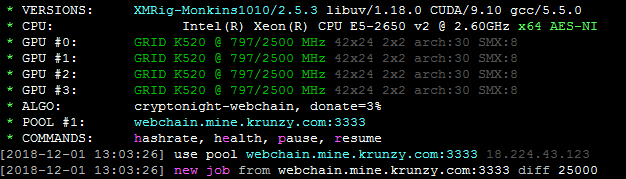

At Krunzy.com we have Webchain (CryptoNight v7) and Swap (CryptoNight SuperFast) mining pools available. These happen to be good picks: their low difficulty means we may actually produce some rewards, and because we have full control of the pools we can reduce anomalies in testing.

First, we need an ARM platform. I will be using Ubuntu Server 18.04 LTS HVM for ARM. I will assume that you can install Ubuntu and bring it to a base configuration, which is covered in a previous article.
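Before building anything it is worth confirming the instance really is ARM. A minimal sketch, assuming a standard Linux userland where uname -m reports aarch64 on 64-bit ARM; the is_arm64 helper name is ours and purely illustrative:

```shell
# Hypothetical helper: succeed when the given machine string names 64-bit ARM.
is_arm64() {
  case "$1" in
    aarch64|arm64) return 0 ;;
    *) return 1 ;;
  esac
}

# Report what the current host is before starting the miner builds.
arch="$(uname -m)"
if is_arm64 "$arch"; then
  echo "ARM64 host detected: $arch"
else
  echo "Not an ARM64 host: $arch"
fi
```

On an A1 instance this should take the first branch; on an x86 instance it takes the second, which is a cheap way to catch launching the wrong instance type.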

For each miner build I will briefly describe the build then provide all the commands.

Webchain Miner ARM

There are not many Webchain miners, so we chose webchain-miner from Webchain’s GitHub. With minimal effort we compiled a working ARM miner. The following steps should produce a working miner.

sudo apt install libmicrohttpd-dev libssl-dev cmake build-essential git libuv1-dev

#GCC 7.1

sudo add-apt-repository ppa:jonathonf/gcc-7.1

sudo apt-get update

sudo apt-get install gcc-7 g++-7

#webchain-miner

git clone https://github.com/webchain-network/webchain-miner.git

mkdir webchain-miner/build

cd webchain-miner/build

cmake .. -DCMAKE_C_COMPILER=gcc-7 -DCMAKE_CXX_COMPILER=g++-7

make

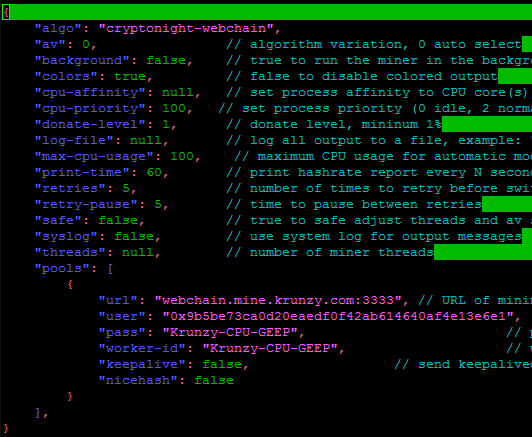

In the same directory as the miner (webchain-miner/build), add or update config.json as follows (feel free to change the address, etc.):

{

"algo": "cryptonight-webchain",

"av": 0, // algorithm variation, 0 auto select

"background": false, // true to run the miner in the background

"colors": true, // false to disable colored output

"cpu-affinity": null, // set process affinity to CPU core(s), mask "0x3" for cores 0 and 1

"cpu-priority": 5, // set process priority (0 idle, 2 normal to 5 highest)

"donate-level": 0, // donate level, minimum 1%

"log-file": null, // log all output to a file, example: "c:/some/path/webchain-miner.log"

"max-cpu-usage": 100, // maximum CPU usage for automatic mode, usually limiting factor is CPU cache not this opt$

"print-time": 60, // print hashrate report every N seconds

"retries": 5, // number of times to retry before switch to backup server

"retry-pause": 5, // time to pause between retries

"safe": false, // true to safe adjust threads and av settings for current CPU

"syslog": false, // use system log for output messages

"threads":

[

{"low_power_mode": 2, "affine_to_cpu": 0},

{"low_power_mode": 2, "affine_to_cpu": 1},

{"low_power_mode": 2, "affine_to_cpu": 2}

], // number of miner threads

"pools": [

{

"url": "webchain.mine.krunzy.com:1111", // URL of mining server

"user": "0xd9b36d8e0ff81da377620239b40de478d0f5a62b", // username for mining server put your wallet addres$

"pass": "x", // password for mining server

"worker-id": "ARM", // worker ID for mining server no spaces

"keepalive": false, // send keepalived for prevent timeout (need pool support)

"nicehash": false

}

]

}

To run the miner, go to the webchain-miner/build directory and run the following command:

./webchain-miner

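To auto run the miner on machine startup, add the following to /etc/rc.local (adjust for your paths). On Ubuntu 18.04 this file may not exist yet; if so, create it and make it executable with sudo chmod +x /etc/rc.local: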

#!/bin/sh -e

cd /home/ubuntu/webchain-miner/build

#run in the background so rc.local can finish

./webchain-miner &

exit 0

Swap Miner ARM

There are a few miner choices for Swap: xmr-stak and flavors of xmrig. We gave xmr-stak a try but could not get it to compile for ARM. We then looked at xmrig and landed on xmrigCC as the miner for Swap on ARM. Getting the ARM build to compile took a little effort, mostly around Boost and the cmake options. The following steps should be done in order for best results.

sudo apt install libmicrohttpd-dev libssl-dev cmake build-essential git libuv1-dev

#GCC 7.1

sudo add-apt-repository ppa:jonathonf/gcc-7.1

sudo apt-get update

sudo apt-get install gcc-7 g++-7

#XMRIG (clone first, from your home directory, so Boost can be built inside the xmrigCC folder)

git clone https://github.com/Bendr0id/xmrigCC.git

cd xmrigCC

#BOOST (built inside the xmrigCC folder)

wget https://dl.bintray.com/boostorg/release/1.67.0/source/boost_1_67_0.tar.bz2

tar xvfj boost_1_67_0.tar.bz2

cd boost_1_67_0

./bootstrap.sh --with-libraries=system

./b2 --toolset=gcc-7

#build boost static libs

./b2 link=static runtime-link=static install --toolset=gcc-7

#back to the xmrigCC folder to build the miner ($HOME is used because the shell does not expand ~ inside -DBOOST_ROOT=...)

cd ..

cmake . -DCMAKE_C_COMPILER=gcc-7 -DCMAKE_CXX_COMPILER=g++-7 -DWITH_CC_SERVER=OFF -DWITH_TLS=OFF -DWITH_HTTPD=OFF -DBOOST_ROOT=$HOME/xmrigCC/boost_1_67_0

make

In the same directory as the miner (xmrigCC), add or update config.json as follows (feel free to change the address, etc.):

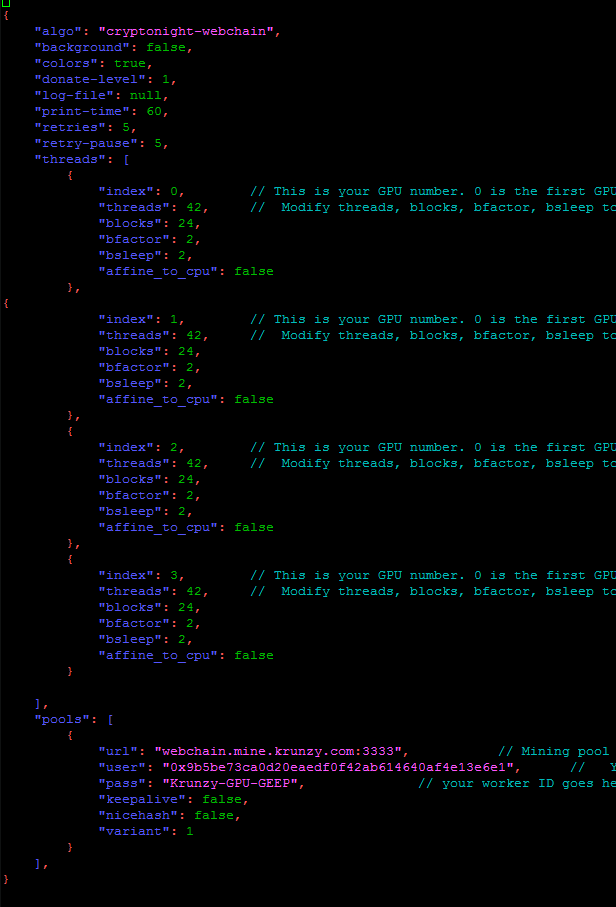

{

"algo": "cryptonight", // cryptonight (default), cryptonight-lite or cryptonight-heavy

"aesni": 0, // selection of AES-NI mode (0 auto, 1 on, 2 off)

"threads": 2, // number of miner threads (not set or 0 enables automatic selection of optimal thread count)

"multihash-factor": 3, // number of hash blocks to process at a time (not set or 0 enables automatic selection of optimal number of hash $

"multihash-thread-mask" : null, // for multihash-factors>0 only, limits multihash to given threads (mask), mask "0x3" means run multihash$

"pow-variant" : "xfh", // specify the PoW variant to use: -> auto (default), 0 (v0), 1 (v1, aka monerov7, aeonv7), ipbc (tube), alloy, x$

// for further help see: https://github.com/Bendr0id/xmrigCC/wiki/Coin-configurations

"background": false, // true to run the miner in the background (Windows only, for *nix please use screen/tmux or systemd service instead)

"colors": true, // false to disable colored output

"cpu-affinity": null, // set process affinity to CPU core(s), mask "0x3" for cores 0 and 1

"cpu-priority": null, // set process priority (0 idle, 2 normal to 5 highest)

"donate-level": 1, // donate level, minimum 1%

"log-file": null, // log all output to a file, example: "c:/some/path/xmrig.log"

"max-cpu-usage": 100, // maximum CPU usage for automatic mode, usually limiting factor is CPU cache not this option.

"print-time": 60, // print hashrate report every N seconds

"retries": 5, // number of times to retry before switch to backup server

"retry-pause": 5, // time to pause between retries

"safe": false, // true to safe adjust threads and av settings for current CPU

"syslog": false, // use system log for output messages

"pools": [

{

"url": "swap.mine.krunzy.com:2222", // URL of mining server

"user": "fh3n1xT9NNJJFPZVNcR8Hr3b4Aybm2R31f7v9NeDbw2tMMgZqzK8hRm1hgPdzRD4W4ZSpJrT8gy5o6D6ksno9VYY1iQFSeTGt.5000", // username for$

"pass": "swARMp", // password for mining server

"use-tls" : false, // enable tls for pool communication (need pool support)

"keepalive": true, // send keepalived for prevent timeout (need pool support)

"nicehash": false // enable nicehash/xmrig-proxy support

}

],

"cc-client": null

}

To run the miner from the xmrigCC folder enter the following command:

./xmrigDaemon -c=config.json

To auto run the miner on machine startup add the following to /etc/rc.local (adjust for your paths):

#!/bin/sh -e

cd /home/ubuntu/xmrigCC

#run in the background so rc.local can finish

./xmrigDaemon -c=config.json &

exit 0

Testing

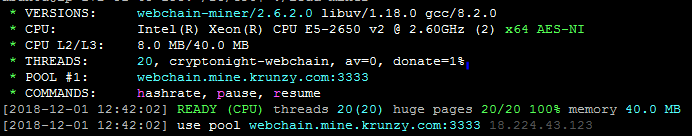

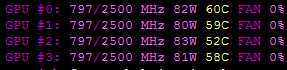

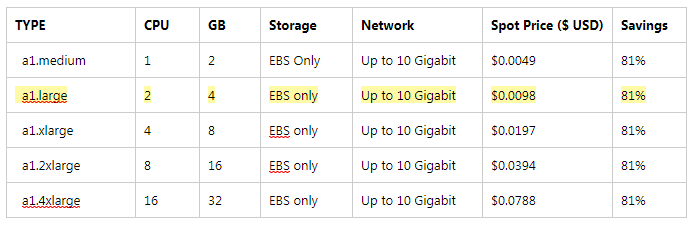

The testing was performed on AWS A1 instances. The pools used were the Krunzy.com Webchain and Swap pools, located in the same region (US) where the tests were performed. Both miners were run at the lowest difficulty the pools offered: the Webchain miner ran at a variable difficulty of 1000 and the Swap miner at a static difficulty of 5000. Each test ran for 60 minutes and was monitored on both the miner and the pool. The testing ran across the A1 series and focused on optimizing the hash rate.

Results

The testing started with the smallest A1 instance, a1.medium (1 CPU, 2GB):

Webchain: miner=16 H/s, pool=14 H/s

Swap: miner=33 H/s, pool=32 H/s

The testing jumped to the largest A1 instance tested, a1.xlarge (4 CPU, 8GB):

Webchain: miner=44 H/s, pool=36 H/s

Swap: miner=80 H/s, pool=100 H/s

Testing and optimization primarily on a1.large (2 CPU, 4GB):

Webchain: miner=30 H/s, pool=26 H/s

Swap: miner=100 H/s, pool=125 H/s
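To compare instance sizes on an equal footing, the miner-side figures above can be divided by the vCPU count. This is a rough sketch using only the numbers reported in this section; the per_vcpu helper is a hypothetical name, and awk is assumed to be available:

```shell
# per_vcpu RATE VCPUS -> hash rate per vCPU, one decimal place
per_vcpu() {
  awk -v rate="$1" -v cpus="$2" 'BEGIN { printf "%.1f\n", rate / cpus }'
}

# Columns: instance, vCPUs, Webchain miner H/s, Swap miner H/s (from the results above)
while read -r instance vcpus webchain swap; do
  echo "$instance webchain=$(per_vcpu "$webchain" "$vcpus") swap=$(per_vcpu "$swap" "$vcpus")"
done <<'EOF'
a1.medium 1 16 33
a1.large 2 30 100
a1.xlarge 4 44 80
EOF
```

By this measure a1.large gives the best Swap rate per vCPU (50.0 H/s), while Webchain drops from 16.0 to 11.0 H/s per vCPU as the instance grows.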

a1.large optimized (see optimized configs for each miner above in setup):

Webchain: using low_power_mode: 2 and 3 threads

miner=38 H/s, pool=32 H/s

"threads":

[

{"low_power_mode": 2, "affine_to_cpu": 0},

{"low_power_mode": 2, "affine_to_cpu": 1},

{"low_power_mode": 2, "affine_to_cpu": 2}

]

Swap: using 2 threads and multihash-factor 3

miner=100 H/s, pool=125 H/s

"threads": 2,

"multihash-factor": 3,

a1.large optimized at scale for 60 minutes:

To create scale, a launch template was created with an auto starting miner and used as part of an Auto Scaling Group (ASG).
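For readers who want to reproduce the scale-out, the following is a sketch of how such a launch template and ASG might be created with the AWS CLI. It is not the exact setup we used: the AMI ID, subnet ID, and resource names are hypothetical placeholders, the AMI is assumed to already auto start the miner, and the region is assumed to come from your CLI configuration.

```shell
# Hypothetical placeholders: replace with your own values before running.
AMI_ID="ami-EXAMPLE"          # AMI with the miner auto starting (assumption)
SUBNET_ID="subnet-EXAMPLE"    # subnet the instances launch into (assumption)
TEMPLATE_NAME="arm-miner-template"
ASG_NAME="arm-miner-asg"

# Print launch template data for a Spot a1.large built from the given AMI.
launch_template_data() {
  printf '{"ImageId":"%s","InstanceType":"a1.large","InstanceMarketOptions":{"MarketType":"spot"}}\n' "$1"
}

# Create the launch template and an ASG of $1 instances.
# Nothing is created in AWS until this function is called explicitly.
create_fleet() {
  aws ec2 create-launch-template \
    --launch-template-name "$TEMPLATE_NAME" \
    --launch-template-data "$(launch_template_data "$AMI_ID")"

  aws autoscaling create-auto-scaling-group \
    --auto-scaling-group-name "$ASG_NAME" \
    --launch-template "LaunchTemplateName=$TEMPLATE_NAME,Version=\$Latest" \
    --min-size 0 --max-size "$1" --desired-capacity "$1" \
    --vpc-zone-identifier "$SUBNET_ID"
}

# Show the template data that would be submitted.
launch_template_data "$AMI_ID"
```

Calling create_fleet 100 would request 100 instances; setting the desired capacity back to 0 when the test is done stops the spend.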

Webchain @ 100 instances: 2250 H/s at pool

Swap @ 20 instances: 1280 H/s
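One way to read these at-scale numbers is as a fraction of the ideal, that is, the single-instance pool rate multiplied by the instance count. The sketch below assumes the optimized a1.large pool rates reported above (32 H/s for Webchain, 125 H/s for Swap) and assumes the Swap figure is also a pool-side reading; fleet_efficiency is a hypothetical helper name.

```shell
# fleet_efficiency OBSERVED INSTANCES PER_INSTANCE -> percent of the ideal rate
fleet_efficiency() {
  awk -v obs="$1" -v n="$2" -v per="$3" \
    'BEGIN { printf "%.1f\n", 100 * obs / (n * per) }'
}

echo "Webchain: $(fleet_efficiency 2250 100 32)% of ideal"
echo "Swap: $(fleet_efficiency 1280 20 125)% of ideal"
```

Pool-side rates are estimated from submitted shares, so over a 60 minute window some shortfall against the ideal is to be expected.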

Spot costs at the time of testing for US-EAST-2 (Ohio, USA):

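Hash cost based on a1.large @ $0.01/hour (rounding up), using the optimized a1.large rates above:

Webchain: 32 H/s (pool) × 3,600 s ≈ 115 kH per instance-hour, or per $0.01

Swap: 100 H/s (miner) × 3,600 s = 360 kH per instance-hour, or per $0.01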
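The hash cost arithmetic can be written out as a small sketch. It assumes the rounded $0.01/hour spot price quoted above; the helper names are hypothetical.

```shell
# hashes_per_hour RATE -> total hashes produced in one hour at RATE H/s
hashes_per_hour() {
  echo $(( $1 * 3600 ))
}

# kilohashes_per_dollar RATE PRICE -> kH bought per dollar at PRICE dollars/hour
kilohashes_per_dollar() {
  awk -v rate="$1" -v price="$2" \
    'BEGIN { printf "%.0f\n", rate * 3600 / price / 1000 }'
}

echo "Webchain: $(hashes_per_hour 32) hashes/hour, $(kilohashes_per_dollar 32 0.01) kH per dollar"
echo "Swap: $(hashes_per_hour 100) hashes/hour, $(kilohashes_per_dollar 100 0.01) kH per dollar"
```

Expressing the result per dollar makes it easier to compare against other instance types or hardware, where the hourly price differs.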

Conclusion

It was encouraging to find we could compile ARM versions of both webchain-miner for Webchain and xmrigCC for Swap. Testing showed the Swap ARM miner has roughly 3 times the hash rate of the Webchain ARM miner (100 H/s versus 32 H/s on a1.large). That is not surprising given the underlying PoW algorithms: Webchain is closer to CryptoNight v7, whereas Swap was engineered to have a lighter PoW with CryptoNight SuperFast. So, we proved we could compile an ARM version of each miner and produce hashes.

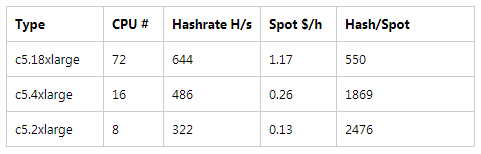

The hash rate was not too bad. Conservatively, an AMD Threadripper thread provides ~100 H/s for Webchain and ~185 H/s for Swap. The optimized a1.large figures put Webchain ARM at about a third of that AMD rate and Swap ARM at slightly over half.

ARM instances are inexpensive at pennies an hour. Because of this you could keep a small hash rate on a pool for a day for about a quarter ($0.25). You could also scale up to 100 instances for an hour to generate load for about 1 dollar. For pool testing this is an inexpensive way to create load, especially when simulating multiple users/addresses.
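Those two cost figures follow directly from the rounded $0.01/hour a1.large spot price quoted in the results. A minimal sketch of the arithmetic, with fleet_cost as a hypothetical helper name:

```shell
# fleet_cost INSTANCES HOURS PRICE_PER_HOUR -> total cost in dollars
fleet_cost() {
  awk -v n="$1" -v h="$2" -v p="$3" 'BEGIN { printf "%.2f\n", n * h * p }'
}

echo "1 instance for 24 hours: \$$(fleet_cost 1 24 0.01)"
echo "100 instances for 1 hour: \$$(fleet_cost 100 1 0.01)"
```

Spot prices move over time and differ by region, so the price argument should be replaced with the current rate before budgeting a test.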

In the end, ARM miners are available and offer a cost-effective way to run smaller hash tests. In AWS, ARM instances can scale to 100 instances using spot pricing for pennies an instance in order to create load. Currently, however, this is not a profitable way to mine cryptocurrency.